Text-to-image generation has become one of the most exciting frontiers in artificial intelligence, enabling the creation of vivid and detailed images from simple textual descriptions. While models like DALL·E, Stable Diffusion, and Imagen have made remarkable progress, challenges remain in making these systems more controllable, versatile, and aligned with user intent.

A recent paper titled “Multimodal Instruction Tuning for Text-to-Image Generation” (arXiv:2506.09999) introduces an approach that teaches text-to-image models to follow multimodal instructions, which combine text with visual inputs to guide image synthesis. This blog post unpacks the key ideas behind the approach, its benefits, and its potential for creative AI applications.

The Limitations of Text-Only Prompts

Most current text-to-image models rely solely on textual prompts to generate images. While effective, this approach has several drawbacks:

- Ambiguity: Text can be vague or ambiguous, leading to outputs that don’t fully match user expectations.

- Limited Detail Control: Users struggle to specify fine-grained aspects such as composition, style, or spatial arrangements.

- Single-Modality Constraint: Relying only on text restricts the richness of instructions and limits creative flexibility.

Multimodal inputs such as images, sketches, or layout hints can address these drawbacks by giving the model richer guidance for image generation.

What Is Multimodal Instruction Tuning?

Multimodal instruction tuning involves training a text-to-image model to understand and follow instructions that combine multiple input types. For example, a user might provide:

- A textual description like “A red sports car on a sunny day.”

- A rough sketch or reference image indicating the desired layout or style.

- Additional visual cues highlighting specific objects or colors.

The model learns to fuse these diverse inputs, producing images that better align with the user’s intent.
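To make the idea of a combined instruction concrete, here is a minimal sketch of how one might be represented in code. The class name, field names, and file path are hypothetical and chosen for illustration; the paper's actual data format may differ.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MultimodalInstruction:
    """Hypothetical container for one instruction; field names are illustrative."""
    text: Optional[str] = None               # a caption-style prompt
    reference_image: Optional[str] = None    # path to a sketch or style reference
    visual_cues: List[str] = field(default_factory=list)  # paths to extra cue images

    def modalities(self) -> List[str]:
        """Return the names of the inputs that were actually supplied."""
        present = []
        if self.text:
            present.append("text")
        if self.reference_image:
            present.append("reference_image")
        if self.visual_cues:
            present.append("visual_cues")
        return present


instruction = MultimodalInstruction(
    text="A red sports car on a sunny day.",
    reference_image="layout_sketch.png",  # placeholder path, not a real file
)
print(instruction.modalities())  # ['text', 'reference_image']
```

Every field is optional, which mirrors the idea that a user may supply any subset of the inputs listed above.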

How Does the Proposed Method Work?

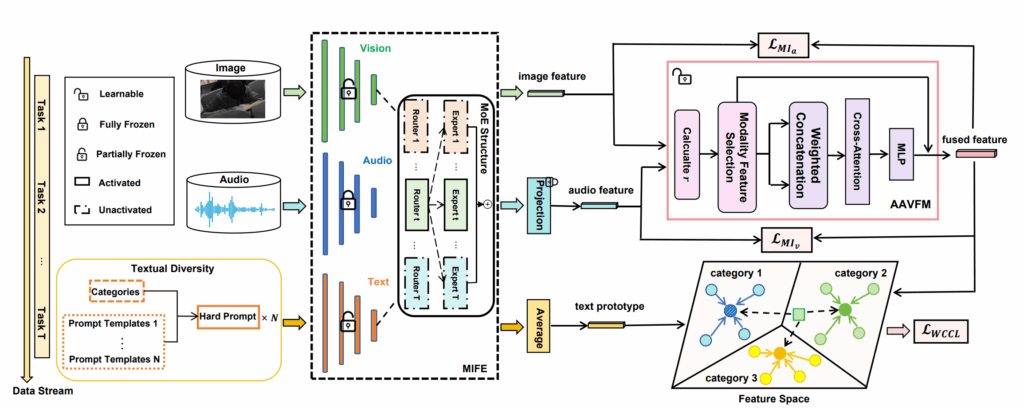

The paper presents a framework that extends diffusion-based text-to-image models with four components:

- Unified Multimodal Encoder: Processing text and images jointly to create a shared representation space.

- Instruction Tuning: Fine-tuning the model on a large dataset of paired multimodal instructions and target images.

- Flexible Inputs: Allowing users to provide any combination of text and images during inference to guide generation.

- Robustness: Ensuring the model gracefully handles missing or noisy modalities.
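The flexible-input and robustness points can be illustrated with a toy sketch. It assumes each modality has already been encoded into a vector in a shared space, fuses whichever vectors are present by averaging, and randomly drops modalities during training so the model sees incomplete inputs. Averaging and modality dropout are common techniques used here as stand-ins; the function names are hypothetical, and the paper's actual encoder and training recipe may differ.

```python
import random
from typing import Dict, List, Optional

Vector = List[float]


def drop_modalities(
    embeddings: Dict[str, Optional[Vector]],
    drop_prob: float,
    rng: random.Random,
) -> Dict[str, Optional[Vector]]:
    """Randomly blank out modalities during training, always keeping at least one."""
    present = [name for name, vec in embeddings.items() if vec is not None]
    kept = [name for name in present if rng.random() >= drop_prob]
    if not kept and present:
        kept = [rng.choice(present)]  # never drop every modality at once
    return {name: (vec if name in kept else None) for name, vec in embeddings.items()}


def fuse(embeddings: Dict[str, Optional[Vector]], dim: int) -> Vector:
    """Average whichever modality embeddings are present into one conditioning vector."""
    available = [vec for vec in embeddings.values() if vec is not None]
    if not available:
        return [0.0] * dim  # fall back to an all-zero (unconditional) signal
    return [sum(vec[i] for vec in available) / len(available) for i in range(dim)]


# Toy embeddings standing in for the outputs of real text and image encoders.
inputs = {"text": [1.0, 0.0, 2.0], "image": [3.0, 4.0, 0.0]}

print(fuse(inputs, dim=3))                                    # [2.0, 2.0, 1.0]
print(fuse({"text": inputs["text"], "image": None}, dim=3))   # [1.0, 0.0, 2.0]
```

In a real system the fused vector would condition the diffusion model, and the fusion step would more likely be a learned module than a plain average.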

Why Is This Approach a Game-Changer?

- Greater Control: Users can specify detailed instructions beyond text, enabling precise control over image content and style.

- Improved Alignment: Multimodal inputs help disambiguate textual instructions, resulting in more accurate and satisfying outputs.

- Enhanced Creativity: Combining modalities unlocks new creative workflows, such as refining sketches or mixing styles.

- Versatility: The model adapts to various use cases, from art and design to education and accessibility.

Experimental Insights

The researchers trained their model on a diverse dataset that pairs multimodal instructions (text plus images) with target images. Key findings include:

- High Fidelity: Generated images closely match multimodal instructions, demonstrating improved alignment compared to text-only baselines.

- Diversity: The model produces a wider variety of images reflecting nuanced user inputs.

- Graceful Degradation: Performance remains strong even when some input modalities are absent or imperfect.

- User Preference: Human evaluators consistently favored multimodal-guided images over those generated from text alone.
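A human preference finding like the last one is typically summarized as a win rate over pairwise comparisons. The sketch below shows that arithmetic on made-up votes; the labels and numbers are purely illustrative and are not results from the paper.

```python
from collections import Counter
from typing import List


def win_rate(votes: List[str], option: str) -> float:
    """Fraction of non-tie votes that prefer `option`."""
    counts = Counter(votes)
    decided = len(votes) - counts["tie"]
    return counts[option] / decided if decided else 0.0


# Made-up votes for illustration only; these are not results from the paper.
toy_votes = ["multimodal", "multimodal", "text_only", "tie", "multimodal"]
print(win_rate(toy_votes, "multimodal"))  # 0.75
```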

Real-World Applications

Multimodal instruction tuning opens exciting possibilities across domains:

- Creative Arts: Artists can provide sketches or style references alongside text to generate polished visuals.

- Marketing: Teams can prototype campaigns with precise visual and textual guidance.

- Education: Combining visual aids with descriptions enhances learning materials.

- Accessibility: Users with limited verbal skills can supplement instructions with images or gestures.

Challenges and Future Directions

Despite its promise, multimodal instruction tuning faces hurdles:

- Data Collection: Building large, high-quality multimodal instruction datasets is resource-intensive.

- Model Complexity: Handling multiple modalities increases training and inference costs.

- Generalization: Ensuring robust performance across diverse inputs and domains remains challenging.

- User Interfaces: Designing intuitive tools for multimodal input is crucial for adoption.

Future research may explore:

- Self-supervised learning to reduce data needs.

- Efficient architectures for multimodal fusion.

- Extending to audio, video, and other modalities.

- Interactive systems for real-time multimodal guidance.

Conclusion: Toward Smarter, More Expressive AI Image Generation

Multimodal instruction tuning marks a significant advance in making text-to-image models more controllable, expressive, and user-friendly. By teaching AI to integrate text and visual inputs, this approach unlocks richer creative possibilities and closer alignment with human intent.

As these techniques mature, AI-generated imagery should become more precise, diverse, and accessible, helping creators, educators, and other users turn their ideas into images.

Paper: https://arxiv.org/pdf/2506.09999

Stay tuned for more insights into how AI is reshaping creativity and communication through multimodal learning.